Something fundamental is changing in how autonomous AI systems are built, deployed, and secured. And it’s not about bigger models, better prompts, or cheaper API calls. It’s about locally hosted models, SLMs and where the trust boundary lives.

For the past three years, the dominant approach to making AI agents “safe” has been to tell the model what not to do: system prompts, content filters, output classifiers, guardrail frameworks. These operate at what I’ll call the suggestion layer: instructions to a probabilistic system that we hope it follows. The emerging pattern replaces hope with enforcement, moving the trust boundary from the prompt down to the operating system kernel.

This isn’t a theoretical shift. Projects like NVIDIA’s OpenShell, E2B, and gVisor are packaging kernel-level containment (Linux technologies like Landlock, seccomp-bpf, and network namespace isolation) into runtimes purpose-built for AI agents. And the implications for small and mid-sized businesses are more significant than most people realize.

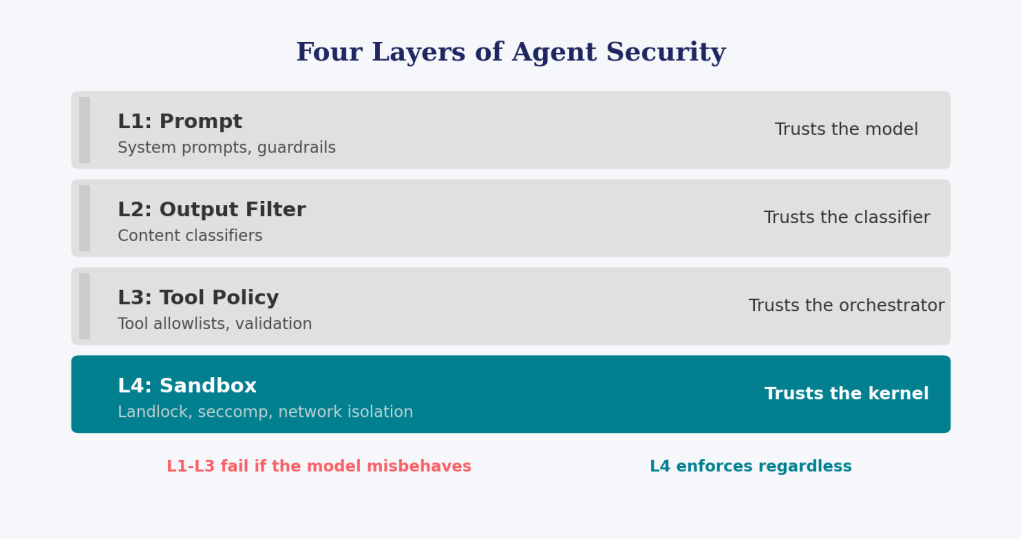

The Four Layers of Agent Security

To understand why this matters, consider the security stack that’s emerging for autonomous AI agents:

Layers 1 through 3 all share a common assumption: the model will behave as instructed. They are suggestions (sophisticated, well-engineered suggestions) but suggestions nonetheless. A jailbroken model, a hallucinated tool call, or an unanticipated prompt injection can bypass all three.

Layer 4 is different. seccomp doesn’t ask the model whether it intended to open a network socket. It blocks the system call at the kernel level, regardless of what the model thinks it’s doing. Landlock doesn’t negotiate filesystem access. It enforces it. The model’s intent is irrelevant.

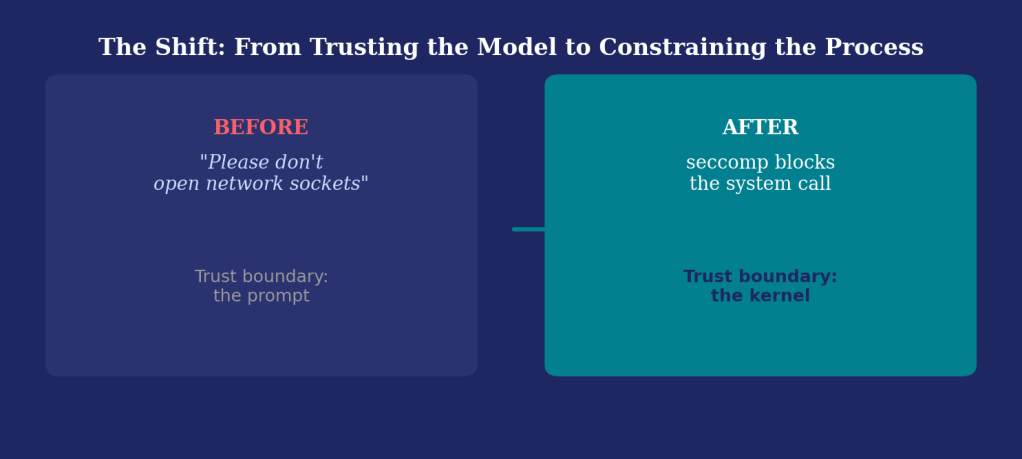

This is the shift: from trusting the model to constraining the process.

Why Prompt Guardrails Aren’t Enough

Let’s be specific about what prompt-level protections can and cannot do.

What they handle well:

- Refusing obviously harmful requests (“write me malware”)

- Steering model behavior within known parameters (“respond only in JSON”)

- Filtering sensitive content from outputs

- Reducing hallucination risk through structured schemas

What they fundamentally cannot do:

- Prevent a confused or jailbroken model from executing

rm -rf /once it has shell access - Stop data exfiltration through DNS queries, HTTP callbacks, or encoding tricks

- Contain a tool-calling loop that escalates privileges through a chain of individually-permitted actions

- Enforce least-privilege on filesystem or network access

- Guarantee compliance, because the model is probabilistic, not deterministic

The core problem is simple: prompt instructions are suggestions to a stochastic system. The moment you give an agent real tools (code execution, file I/O, network access, database writes), you need a deterministic enforcement layer that operates below the model. The model will occasionally do something you didn’t anticipate. The question is whether your security posture accounts for that inevitability or pretends it away.

This doesn’t mean prompt guardrails are useless. They’re the seatbelt. But if you’re letting the agent drive autonomously, you also need the crash cage.

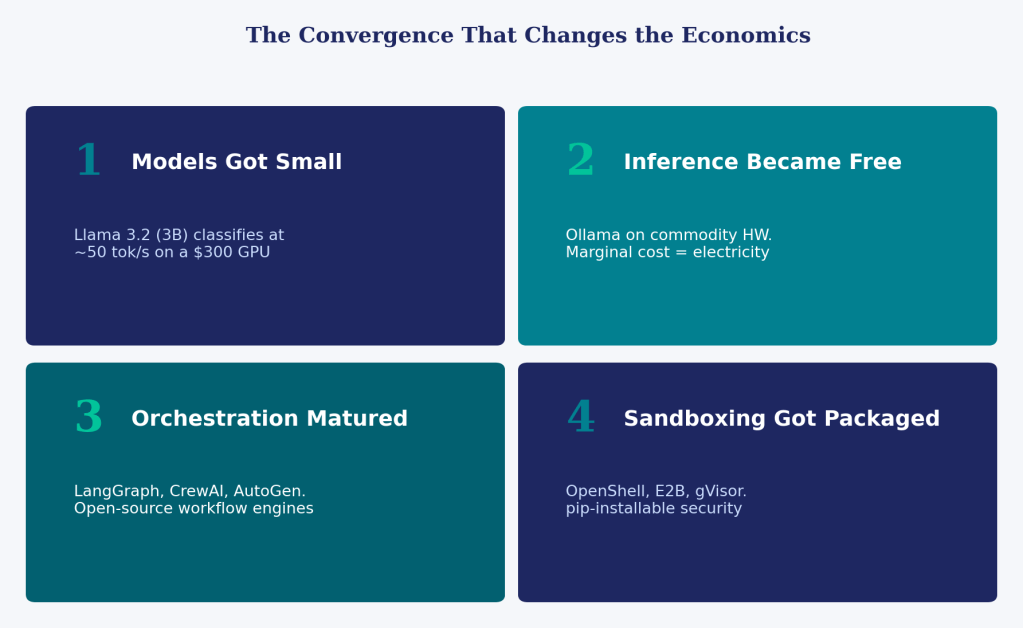

The Convergence That Changes the Economics

The sandbox-runtime pattern isn’t new in concept. Containers and VMs have isolated workloads for decades. What’s new is the convergence of four trends that make this accessible beyond well-funded enterprises:

1. Models got small enough. Llama 3.2 at 3 billion parameters classifies documents at ~50 tokens/second on a consumer GPU. Qwen 2.5 at 7 billion parameters extracts structured JSON with accuracy that would have required GPT-4 two years ago. The capability threshold for useful work has dropped below the hardware threshold for local deployment.

2. Inference became free at the margin. Ollama and LMStudio run on commodity hardware. No API keys, no per-token billing, no data leaving the premises. The marginal cost of processing document number one million and one is electricity. For workloads with predictable volume (invoice processing, contract review, receipt classification), the total cost of ownership collapses.

3. Orchestration frameworks matured. LangGraph, CrewAI, and AutoGen brought workflow orchestration patterns (prompt chaining, routing, parallel execution, orchestrator-worker delegation) into open-source libraries that a single developer can deploy. These were enterprise consulting engagements eighteen months ago.

4. Sandboxing got packaged. OpenShell bundles Landlock, seccomp-bpf, and network isolation into a pip-installable runtime. E2B open-sourced their sandbox infrastructure. gVisor provides a user-space kernel. The security engineering that previously required a dedicated team is becoming a configuration choice.

What This Means for Small and Mid-Sized Businesses

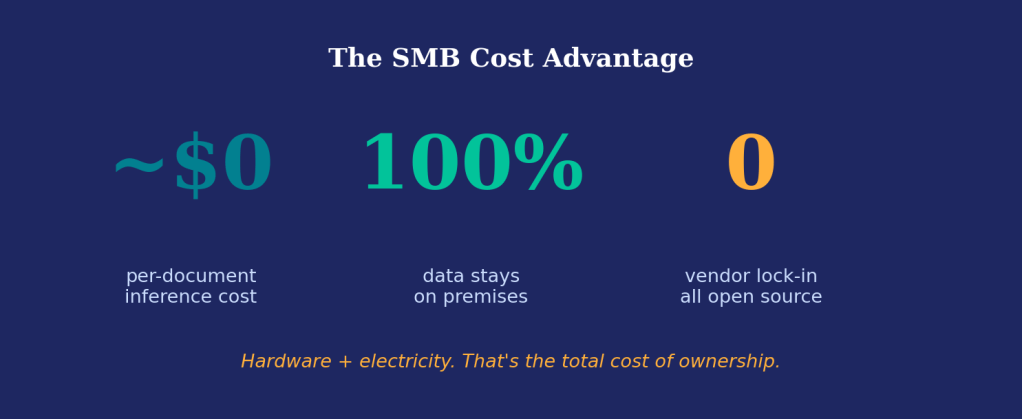

Here’s the argument in its starkest form: an accounting firm with fifteen employees can now run a document intelligence pipeline (classify invoices, extract line items, validate against purchase orders, flag anomalies) on a single workstation with a mid-range GPU. With a sandboxed runtime, they can let the agent execute code to generate reports and run calculations, safely contained. The total cost is hardware plus electricity.

That same capability, delivered through cloud AI APIs with enterprise security controls, would cost tens of thousands annually in API fees alone, before factoring in the compliance overhead of sending financial documents to third-party inference endpoints.

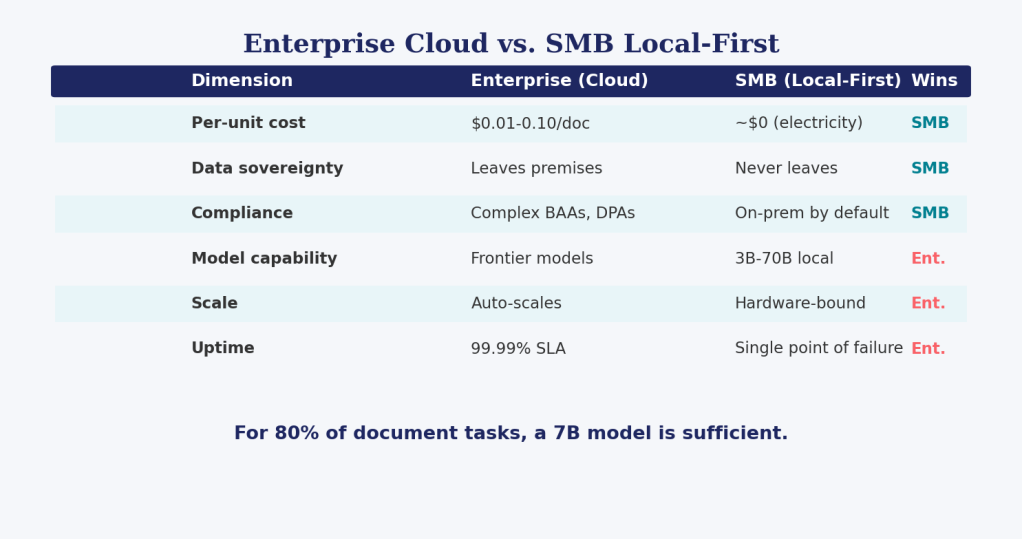

But the claim requires nuance. The local-first sandboxed pattern doesn’t give SMBs “the same thing” as enterprise deployments. It gives them something different, and in several dimensions, something better:

The critical insight: for the vast majority of document processing, classification, extraction, and validation tasks that SMBs actually encounter, a 7-billion-parameter model is sufficient. You don’t need a frontier model to classify an invoice versus a receipt. You don’t need 200 billion parameters to extract a vendor name and line item amounts. The frontier models’ advantage lives in reasoning over ambiguous, novel scenarios, the last 20% of complexity that most small businesses don’t encounter in their operational workflows.

The Autonomy Spectrum: Not Everything Needs a Sandbox

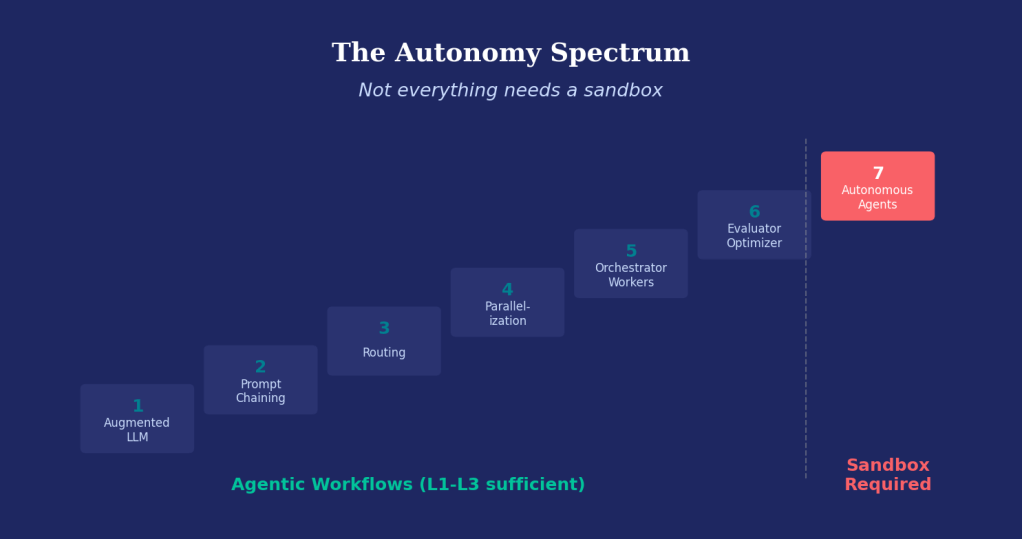

An important caveat: sandboxing is not universally necessary. The need scales directly with the agent’s degree of autonomy.

Anthropic’s complexity ladder provides a useful framework:

- Augmented LLM: single model call with tools

- Prompt chaining: sequential calls, fixed order

- Routing: model picks a branch, but branches are predefined

- Parallelization: multiple calls simultaneously

- Orchestrator-workers: one model delegates to others

- Evaluator-optimizer: loop with quality check

- Autonomous agents: open-ended reason-act loop

Levels 1 through 6 are agentic workflows: the developer controls the graph topology, the model fills in decisions at specific nodes. The sequence of steps is deterministic even though the content at each step is not. For these patterns, prompt guardrails (L1), output filtering (L2), and tool policies (L3) are generally sufficient because the model never decides what to execute, only what to generate within a constrained slot.

Level 7 is the autonomous agent: the model decides both what to do and when to stop. It operates in a reason-act-observe loop, selecting tools and determining next steps dynamically. This is where sandbox containment becomes essential, because the model is making execution decisions that the developer didn’t explicitly enumerate.

For an SMB running a fixed document processing pipeline (parse, classify, extract, validate, route), the workflow pattern (levels 2–4) delivers full value without sandbox overhead. The graph topology is hardcoded. The model classifies and extracts but never chooses what code to run.

For that same SMB wanting to add autonomous financial reconciliation, where the agent investigates discrepancies, queries multiple data sources, generates and executes analysis code, and proposes corrective actions, sandbox containment transforms a risky experiment into a deployable system.

The Honest Pros and Cons

In favor of the local sandboxed pattern:

- Zero marginal inference cost eliminates the per-document fee that makes cloud AI prohibitive at scale for small businesses

- Data never leaves the premises, which simplifies compliance with privacy regulations and eliminates an entire category of data breach risk

- Kernel-level containment provides security guarantees that are mathematically independent of model behavior. The sandbox works whether the model is cooperating or compromised

- No vendor lock-in: the stack is Ollama + LangGraph + PostgreSQL + OpenShell, all open source

- Capability floor is rising: each generation of open models closes the gap with frontier models for structured tasks

Against, or at least requiring honest assessment:

- Operational burden is real. “Free inference” stops being free when your one IT person spends two days debugging why a Landlock policy broke after a kernel update. SMBs often don’t have that person.

- Model evaluation is ongoing work. Ollama makes pulling a new model trivial, but knowing whether Llama 3.3 performs better than 3.2 on your specific documents requires testing infrastructure most small businesses won’t build.

- The stack isn’t turnkey yet. Setting up LangGraph + Ollama + OpenShell + PostgreSQL requires a developer who understands the full stack. The tools exist; the packaged, deploy-in-an-afternoon solution for non-technical teams doesn’t — yet.

- Single point of failure. A workstation under a desk doesn’t have the redundancy of a cloud deployment. Hardware failures mean downtime.

- Sandbox ≠ correctness. A sandbox prevents the agent from damaging your system. It does not prevent the agent from confidently extracting the wrong amount from an invoice and routing it into your accounting system. Human review workflows remain essential for high-stakes outputs.

- Latency overhead. Sandbox startup adds 50 to 500 milliseconds per execution context. For real-time conversational agents, that’s noticeable. For batch document processing, it’s irrelevant.

- Frontier model gap persists for complex reasoning. For ambiguous contracts, nuanced financial analysis, or multi-step reasoning over novel scenarios, local 7B models still lag behind frontier cloud models. The gap is narrowing, but it exists.

What This Means Going Forward

The pattern I’m describing (local models, workflow orchestration, kernel-level sandboxing, packaged for deployment) isn’t speculative. The components exist today. What’s missing is the integration layer: solutions that bundle these capabilities into something an SMB can deploy without hiring a machine learning engineer.

This is the opportunity space. Not building another model, not building another cloud API wrapper, but packaging the local-first sandboxed agent pattern into vertical solutions (document intelligence for accounting firms, contract review for legal practices, inventory classification for distributors) that deliver enterprise-grade capability at a fundamentally different cost structure.

The shift is real, but it’s not automatic. It requires someone to close the gap between “the tools exist” and “my business can use them.” The businesses that figure out that packaging problem will define the next wave of AI adoption — not by pushing the frontier of what models can do, but by making what models can already do accessible, affordable, and safe for the rest of us.

Charles Chukwudozie builds agentic ai systems for enterprise and with local-first AI. ATLAS+ is an open-source agentic document processing platform powered by Ollama and LangGraph.